The word doing the work

At Google Cloud Next 26, two customer quotes sat fifteen minutes apart in the same press release. They described opposite trajectories under the same word.

Google Cloud Next 26 ran from 22 April in Las Vegas. Thomas Kurian titled the keynote The Agentic Cloud. The list of announcements was long and most of it was legit.[1]

[1] TPU 8t scales to 9,600 chips and 2 PB high-bandwidth memory per superpod. TPU 8i connects 1,152 chips per pod. Ironwood delivers 4.6 petaFLOPS per chip, scaling to 42.5 exaFLOPS in a 9,216-chip superpod. Customer endorsements from Unilever, Citadel Securities, Mattel, Intuit, McDonald's, NASA Artemis II, L'Oréal, and Burns & McDonnell.

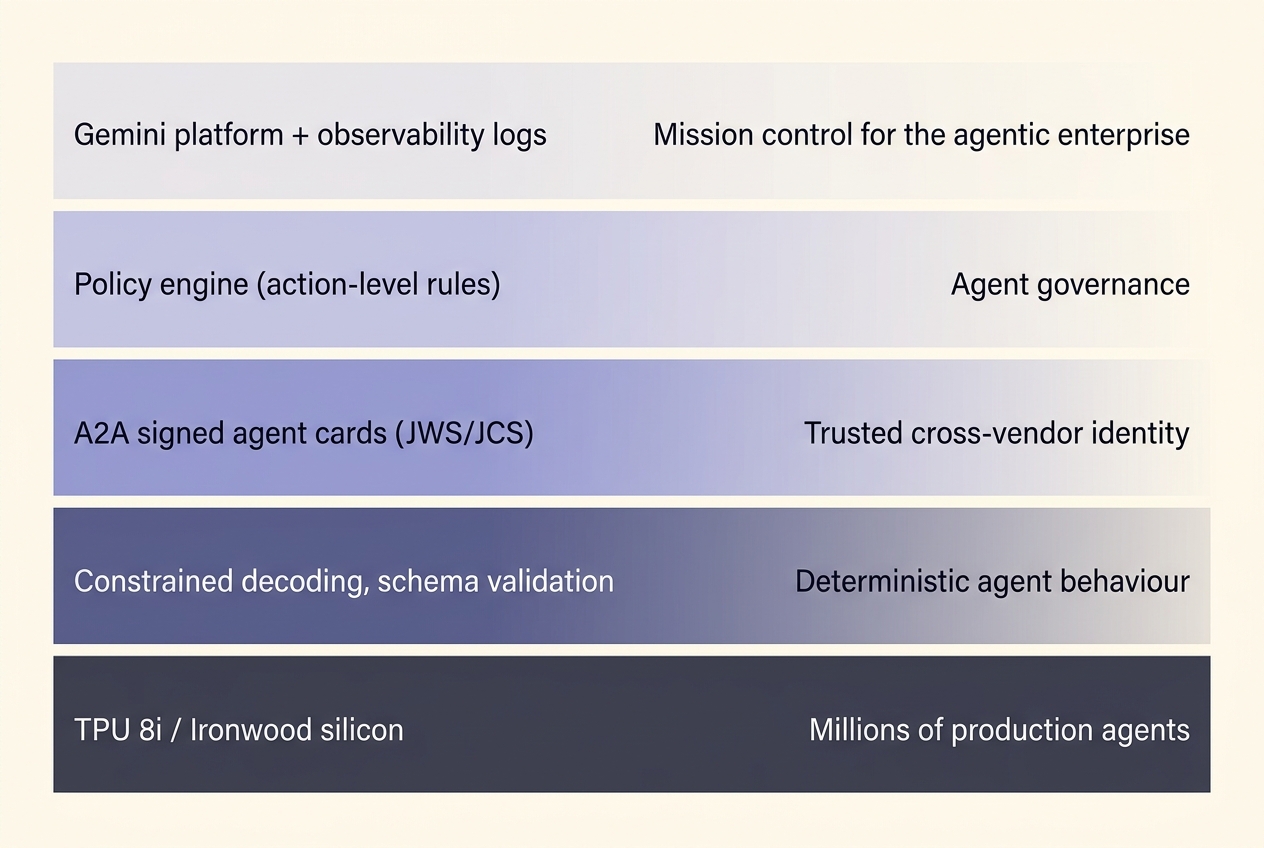

- Gemini Enterprise Agent Platform replaces Vertex AI as the unified control plane for building and running agents

- TPU 8t for training, TPU 8i for inference, pitched as the enabler for running millions of agents concurrently

- Anthropic committed to up to a million previous-generation Ironwood units

- A2A v1.0 went to production across 150 organisations

- ADK reached v1.0 across four languages

- Model Garden grew past 200 models, including Claude

- Workspace Studio brought no-code agent building to the productivity surface

- Pichai claimed 75% of new code at Google is now AI-generated

- 2026 capex: $175–185 billion, up from $32 billion in 2022

- Audience: 32,000. Marketing line: mission control for the agentic enterprise

I read the announcements over a couple of days, the way I read most of these. Something stopped me halfway through.

The word

In the same press release, two customer quotes sat fifteen minutes apart. The CIO of L'Oréal described his direction of travel as moving from deterministic workflow automation to autonomous, outcome-oriented agent orchestration. Three slides earlier, the platform itself was described as supporting deterministic orchestration patterns so that agents could follow well-specified paths every time. Both quotes are verbatim from the same document, and they describe opposite trajectories under the same word.[2]

[2] The L'Oréal CIO described moving from deterministic workflow automation to autonomous, outcome-oriented agent orchestration. Burns & McDonnell described combining deterministic business rules with probabilistic reasoning. Both from the same press release. One uses deterministic to name what they are escaping; the other uses it to name what they have built on.

That was where the words and the architecture stopped lining up. Once I had noticed, the same shape kept showing up across the keynote.

Two jobs, one word

The word deterministic was being asked to do two completely different jobs in the same press release, and the press release moved between them without flagging the change. In the engineering layer of the platform, deterministic means the routing graph between agents is fixed, the policy rules are evaluated by code rather than inferred by a model, and the API calls to systems of record are predictable. The control flow is locked down. The picture is a flowchart, with agents at the nodes. In the L'Oréal quote, deterministic means the boring, slow, hardcoded automation that companies have lived with for thirty years. The thing they want to escape. The CIO is announcing his move toward the opposite of that, toward agents that decide for themselves what step comes next.

The word covers both senses, used for the platform's strength and for the customer's escape from that strength, sitting two paragraphs apart in a press release that treats both endorsements as if they reinforced each other.

The skeleton

A large language model, by construction, samples from a probability distribution over tokens. Set the temperature above zero, ask the same question twice, and you get two different answers. The mechanism that makes these models useful for language is the same mechanism that makes them non-deterministic at the output layer. You cannot make an LLM deterministic without lobotomising the thing that made you want to use it.[3] What you can do is build a deterministic skeleton around it. You can hardcode the routing graph. You can put a policy engine in front of every action the agent tries to take. You can validate every output against a JSON schema. You can force the model, at decoding time, to produce only tokens that conform to a specified shape. Microsoft's open-source toolkit, released two weeks before the Google event, does exactly this and is unusually honest about its scope.[4] The architecture document says, in plain words, that the policy engine governs agent actions, and that LLM inputs and outputs sit outside its remit.

[3] Temperature above zero means the same input produces different outputs across invocations. Microsoft's Agent Governance Toolkit documentation states this directly: regulators in finance, healthcare and consumer credit require deterministic, auditable decision rationale, so the recommended pattern is to exit to a deterministic workflow, leaving the LLM as a probabilistic component inside it.

[4] The Agent Governance Toolkit (MIT licence, 2 April 2026) ships seven packages across Python, TypeScript, Rust, Go, and .NET. Agent OS intercepts every agent action at sub-millisecond p99 latency. The README states the limit plainly: the policy engine and agents run in the same process; AGT does not provide OS kernel-level isolation. The trust score (0–1000) tracks behavioural reputation from policy compliance, not semantic correctness.
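A minimal sketch of the skeleton idea, under loud assumptions: the "model" is three made-up logits, the action names are invented, and the policy is a hypothetical allow-list. None of it is taken from any vendor's API. It shows only where the determinism lives: the gate gives the same verdict for the same action every time, while the action itself is still sampled.

```python
import math
import random

# Hypothetical toy "model": fixed logits over three candidate next actions.
LOGITS = {"refund_customer": 2.0, "escalate_to_human": 1.5, "close_ticket": 0.5}

# Hypothetical policy: a fixed allow-list evaluated by ordinary code.
ALLOWED_ACTIONS = {"escalate_to_human", "close_ticket"}


def sample_action(logits, temperature, rng):
    """Pick one action. Temperature 0 is greedy (argmax); above 0 it samples."""
    if temperature == 0:
        return max(logits, key=logits.get)
    # Softmax with temperature (shifted by the max for numerical stability).
    scaled = {name: value / temperature for name, value in logits.items()}
    peak = max(scaled.values())
    weights = {name: math.exp(value - peak) for name, value in scaled.items()}
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


def policy_gate(action):
    """Deterministic check: same action in, same verdict out."""
    return action in ALLOWED_ACTIONS


def run_step(temperature, rng):
    """One agent step: probabilistic choice, then deterministic gate."""
    action = sample_action(LOGITS, temperature, rng)
    return action, policy_gate(action)


rng = random.Random()
for _ in range(5):
    print(run_step(1.0, rng))
```

Run it a few times and the chosen action changes from run to run, while the allowed or blocked verdict for any given action never does. The gate constrains what the agent may do and says nothing about whether an allowed choice was the right one.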

Where the sale gets made

So when an enterprise vendor uses the word deterministic about an agent, what is actually being claimed is that the boundaries around the agent are predictable, while the agent itself, the part that does the language, the part that decides what to summarise and how to phrase a question and which tool to call, remains probabilistic. The marketing collapses these two things into one. The reader is invited to hear that the agent is deterministic, while the architecture is doing something more limited and less exciting. The phrasing is too useful to be accidental. The thing buyers are anxious about, the thing risk committees keep stalling on, is the probabilistic surface of these systems. They want to know the agent will do the same thing next time. The word deterministic answers that question while the architecture leaves it open, and the gap between the answer and the architecture is where the sale gets made.[5]

[5] Three constraint mechanisms are in production. Constrained decoding (OpenAI August 2024, Google I/O 2024, Anthropic November 2025) masks invalid tokens at each step. Post-generation validation via Pydantic or Zod retries on schema failure. Cryptographic identity at handoffs via A2A signed agent cards (JWS over JCS-canonicalised JSON, RFC 7515 / RFC 8785). The documented limit across all three vendors: schema enforcement covers structural validity; semantic correctness of the values requires separate verification.
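The structural-versus-semantic limit is easy to see in a toy case. The sketch below is an assumption-laden stand-in: a hand-rolled type check plays the role that Pydantic, Zod or constrained decoding would play in production, and the schema, order table and refund payload are all hypothetical.

```python
import json

# Hypothetical schema for a refund decision: field name -> required type.
REFUND_SCHEMA = {"order_id": str, "amount": float, "currency": str}

# Hypothetical system of record: order id -> amount actually paid.
ORDERS = {"A-1001": 49.99}


def is_structurally_valid(raw):
    """True if raw is a JSON object with exactly the expected fields and types."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return False
    if not isinstance(data, dict) or set(data) != set(REFUND_SCHEMA):
        return False
    return all(isinstance(data[key], kind) for key, kind in REFUND_SCHEMA.items())


def is_semantically_correct(raw, orders):
    """Separate check: the refund must not exceed what the order paid."""
    data = json.loads(raw)
    paid = orders.get(data["order_id"])
    return paid is not None and 0 < data["amount"] <= paid


# Well-formed output with a misplaced decimal point.
output = '{"order_id": "A-1001", "amount": 4999.0, "currency": "EUR"}'

print("structurally valid:", is_structurally_valid(output))
print("semantically correct:", is_semantically_correct(output, ORDERS))
```

The payload passes the schema check and fails the business check. The second function needs knowledge the schema does not have, which is the part the word deterministic tends to leave out.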

The same trick

Millions of agents

Once I had this in mind, the same trick showed up around the millions of agents claim. Google announced that TPU 8i can concurrently run millions of agents cost-effectively. The chips can indeed run that many inference jobs in parallel. Whether any of those jobs add up to a working business is a different question entirely, answered by different evidence and not addressed by the silicon. The two claims share a noun and very little else, and a reader who is not paying close attention takes away the impression that millions of governed agents are about to start handling enterprise workflows reliably. The slippage between we built the chips and we built the working system sits in the same shape as the slippage between the routing is deterministic and the agent is deterministic. Both lean on the reader to translate from the headline claim to the actual artefact, and both count on the reader skipping that step.[6]

[6] The MAST taxonomy (NeurIPS 2025 spotlight) analysed 1,642 traces across seven open-source multi-agent frameworks. Failure distribution: specification problems 41.8%, coordination failures 36.9%, verification gaps 21.3%. A single agent at 99% per-step reliability across 10 steps yields 90.4% overall; at 95%, 59.9%. The authors' conclusion: base model improvements alone cannot address the full taxonomy.
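The per-step reliability arithmetic is worth checking by hand. A quick sketch, assuming each step succeeds or fails independently of the others (a simplification, since real agent steps are often correlated):

```python
def end_to_end_reliability(per_step, steps):
    """Probability that every one of `steps` independent steps succeeds."""
    return per_step ** steps


for per_step, steps in [(0.99, 10), (0.95, 10), (0.95, 5)]:
    overall = end_to_end_reliability(per_step, steps)
    print(f"{per_step:.0%} per step x {steps} steps -> {overall:.1%}")
```

Under that assumption, ten steps at 99% come to about 90.4% and ten steps at 95% to about 59.9%. The 77.4% figure is what 95% gives over five steps, not ten.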

Full stack, open standards

The same shape shows up at a higher level, in the central pitch of the entire keynote. Kurian framed Google's competitive case as owning the full stack from chip to inbox, with competitors handing you the pieces, not the platform. The argument is that vertical integration through Google's silicon, models, cloud and distribution beats best-of-breed assembly. In the same announcement, Google made a series of moves that point in the opposite direction. The Model Garden now hosts more than two hundred models, including Claude from Anthropic, which is at the same time committing to up to a million Ironwood units. A2A v1.0, an open standard for cross-vendor agent communication, went to production at 150 organisations. Managed MCP servers were added through Apigee. Cross-Cloud Lakehouse, on Apache Iceberg, lets customers query data sitting in AWS or Azure without copying it. Microsoft 365 interoperability was announced for Workspace.

Both stories are real, both have real engineering behind them, and they cannot both be the answer to the same question. Either the unified Google stack is the path to success, in which case the open-standard work is competing with itself, or the heterogeneous reality of enterprise IT is what most customers are actually buying for, in which case full stack from chip to inbox is a description of the sales pitch while the deployment pattern most customers want looks more open than that. The press release takes both positions in the same hour and treats them as a single coherent offer.

Each component does what it says, and the collective story being told over those components asks for more than the sum of them can support.

Every hyperscaler did some version of this in April. The pattern matters because it tells you something about how the agentic-AI conversation is currently being conducted, and about what gets slipped past the reader without a label. A signed agent card proves the agent's origin, which is useful for several purposes and silent on whether the agent will behave correctly. A policy engine blocks unauthorised actions, which is useful for the same kind of reason and equally silent on whether the authorised actions the agent chooses are the right ones. Real signed agent cards, real policy engines, real cryptographic identity, real integration with cloud-scale silicon. Every piece on the table works individually, but the claims made over them go further than the engineering supports.
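What a signature does and does not establish can be shown in a few lines. This is a deliberately simplified stand-in, not the A2A mechanism: it uses a shared-secret HMAC from the Python standard library instead of a real JWS, sorted-key JSON as a rough approximation of JCS canonicalisation, and an invented agent card and key.

```python
import hashlib
import hmac
import json

SECRET = b"demo-shared-secret"  # hypothetical key, for illustration only


def canonical(card):
    """Rough stand-in for JCS: sorted keys, no insignificant whitespace."""
    return json.dumps(card, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign(card, key=SECRET):
    """Return a hex HMAC-SHA256 tag over the canonical form of the card."""
    return hmac.new(key, canonical(card), hashlib.sha256).hexdigest()


def verify(card, signature, key=SECRET):
    """True if the signature matches the card; constant-time comparison."""
    return hmac.compare_digest(sign(card, key), signature)


# Invented agent card; the field names are assumptions, not the A2A schema.
card = {"name": "refund-agent", "publisher": "example.com", "skills": ["issue_refund"]}
signature = sign(card)
tampered = dict(card, publisher="attacker.example")

print("original verifies:", verify(card, signature))
print("tampered verifies:", verify(tampered, signature))
```

Verification answers one question: was this card issued by the holder of the key and left unaltered? Nothing in `verify` looks at what the agent does once it is running.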

The audit question

The piece that has stayed with me longest is the audit question. In the same week as the agentic platform launch, Deloitte announced a Google Cloud Agentic Transformation Practice. The same firm that will be hired to audit a client's deployment of agents is now formally a Google Cloud transformation partner with revenue tied to the volume of those agents in production. The arrangement was announced on the press wire, and the structural absurdity of it does not seem to have occurred to the people writing the announcement, which is its own data point about the conversation we are inside.[7]

[7] All Big Four firms (Deloitte, EY, KPMG, PwC) simultaneously maintain AI audit practices and AI deployment consulting partnerships with the same cloud providers. The EU AI Act allows internal (first-party) conformity assessment under Annex VI for most high-risk systems. Third-party assessment is required only in narrower categories. Auditor independence is identified in EU AI audit literature as an unresolved design consideration of the conformity assessment regime.

I keep trying to find a clean way to ask this. If you build the agents, and you sell the runtime that hosts the agents, and you write the audit log that records what the agents did, and you provide the observability tools that interpret the audit log, and the consultancy paid to certify that the agents behaved correctly is also paid to deploy more of those agents, who exactly is checking the work? The answer the announcement implies is that the system checks itself. Anyone who has worked inside a regulated industry will recognise that this is not how independent assurance works, and that calling something governance does not make it independent of the entity being governed.[8]

[8] DORA requires named human accountable owners for ICT third-party risk in financial services. AI Act Article 14 requires human oversight of high-risk AI systems as a continuing obligation throughout deployment; start-of-deployment approval alone is explicitly insufficient. The substrate-level cryptographic provenance gap, where no single operator can selectively rewrite the record, remains open as of April 2026.

I find I am angrier about this than I expected to be when I started writing. The engineering is real and the technology is interesting, and the claims being made on top of both keep getting ahead of them. The gap is where the next decade of enterprise spending will be either well-deployed or wasted, and the press releases are doing everything they can to make that gap invisible.

The pattern

Some of this is industry standard, and some of it is genuinely new. The millions of agents and deterministic orchestration and mission control for the agentic enterprise phrasing is new in the sense that we did not have agentic systems to oversell three years ago. The pattern is older. Every wave of enterprise software has had its own version of the trick, the word that does the work the architecture cannot. Big Data had real-time. Blockchain had immutable. AI had explainable. Each of those words pointed at something real in narrow circumstances, and got deployed in marketing so promiscuously that it lost the precision that made it useful in the first place. Deterministic is becoming the next entry on that list. Mission control is shaping up to be the one after.

What a careful reader does

The question I am sitting with is what a careful reader is supposed to do. The honest answer is the boring one. Read the architecture documents, and read them after the press release rather than before. Read what the open-source projects say their tools do not do, because the limits sections are written by engineers and the press releases are written by communications. When two parts of the same announcement contradict each other, take the contradiction seriously rather than smoothing it over. Pay attention to who is auditing whom, and ask whether the auditor would still be paid if the answer came back wrong.

I happen to be testing parts of this directly through one of my own ventures, where the question of what cryptographic provenance looks like under heterogeneous agent deployments is a daily problem rather than a thought experiment. The argument here does not depend on that work. The view from inside it is that the language being used at the platform level is running ahead of the engineering being done at the substrate level, even as that engineering makes real progress.

What lingers from Cloud Next 26 is the way the same words do work in two directions at once: a vendor that is unified while its standards are open, agents that are deterministic while their workflows are autonomous, mission control that runs from the centre while the agents act on their own. Each pair is internally contradictory, presented as a single coherent offer to a buyer who is given no time to notice. The engineering inside the announcement is doing real and serious work, and the story being told over it has run ahead of that work. The word deterministic, on a Wednesday morning in April, was where the gap between them first opened up for me.