Your role is still safe

I have spent eighteen months building with LLMs daily. The structural reasons they work brilliantly for coding are the same reasons they fail at professional judgement in law, accounting, and every domain where the right answer lives outside the question you asked.

I have been working with large language models daily for about a year and a half. And before that, I worked with Machine and Deep learning systems for a few years.

Recently I had a long technical conversation with some friends about building a domain-aware agent for professional services — law, accounting, project management. We started with architecture and after a few iterations we had left the tool behind entirely and ended up at the structural limits of what these systems can and cannot do.

What that conversation crystallised is worth writing down, because I think the current panic about AI replacing professional roles is built on a misunderstanding of how these systems actually work under the surface.

The current limitations

Large language models are extraordinarily good at a narrow set of things. They parse natural language with remarkable accuracy. They translate between formats like a messy paragraph into structured data, a specification into code, a question into a search query. They summarise, draft, and accelerate the mechanical parts of knowledge work.

What they do not do, unless you explicitly tell them to, is challenge their own output. And believe me, prompt engineering is not a thing! More on that in the next article.

LLMs can search, compare against sources and have access to an indiscriminate, unvetted, enormous number of references. During the process of generating a response, however, each sequence of words that becomes a claim is not examined, verified, or grounded against anything. The model does not pause mid-sentence to ask whether what it is about to say is actually true. It does not explore the alternative avenues hidden from the path it is already on. It produces text that is statistically likely given the input, and moves on to the next token.

Vendors are trying to mitigate this by implementing multiple agents in a loop that attempt to validate each other. The deterministic harness that is starting to emerge and getting promoted by the same vendors is actually telling you what is not working with LLMs. And on this, I wrote already a couple of articles and will explore more about the effects of such mechanisms, where the solution is not a harness, but rather knowing when to use LLMs and when to avoid them.

After the digression, the statistical likelihood and factual accuracy of these LLMs are often correlated enough to appear impressive, and uncorrelated often enough to be dangerous. The gap between these two things is where every serious limitation lives.

Why it works for coding

The reason language models work so well for writing code is that coding sits in a rare sweet spot where the model's structural biases happen to point in a useful direction.

Code has verifiable ground truth. Tests pass or they fail, types check or they do not, the compiler accepts or rejects. When the model produces code, there is an objective check at the end that tells you whether the output is right. So, the model does not need to know if it is right, something or better someone else does and should.

Models are also trained on enormous codebases. The reasoning patterns that emerge (decompose the problem, define interfaces, match known patterns) do align with how good software engineering actually works. The model's instincts, for once, point toward correctness.

And the tool surface is universal and free, like filesystem, shell, git, package managers. Every developer has the same primitives, and the model has seen millions of examples of them being used well. With the result that the integration is clean because the environment is standardised.

The coding sweet spot: verifiable output (tests, types, compilers), aligned training (enormous codebases shaping the right instincts), and universal tooling (filesystem, shell, git). This combination is specific to coding. It is the exception, and understanding why it works so well is the first step toward understanding why everything else does not.

How it actually works underneath

The standard explanation that LLMs predict the next token is fairly accurate but hides what actually matters.

Let me explain why. A large language model starts as a random matrix with billions of parameters, initialised with noise, spread across hundreds of layers. Training data adjusts those parameters using deep learning to find statistical patterns in an enormous corpus of text. Given an input, what comes out the other end is a system that navigates through those layers toward an output. The width of the path expresses how deterministic or creative the model behaves. This is controlled at inference time by a parameter called temperature, which decides how much the model sticks to the highest-probability next word or explores less likely alternatives.

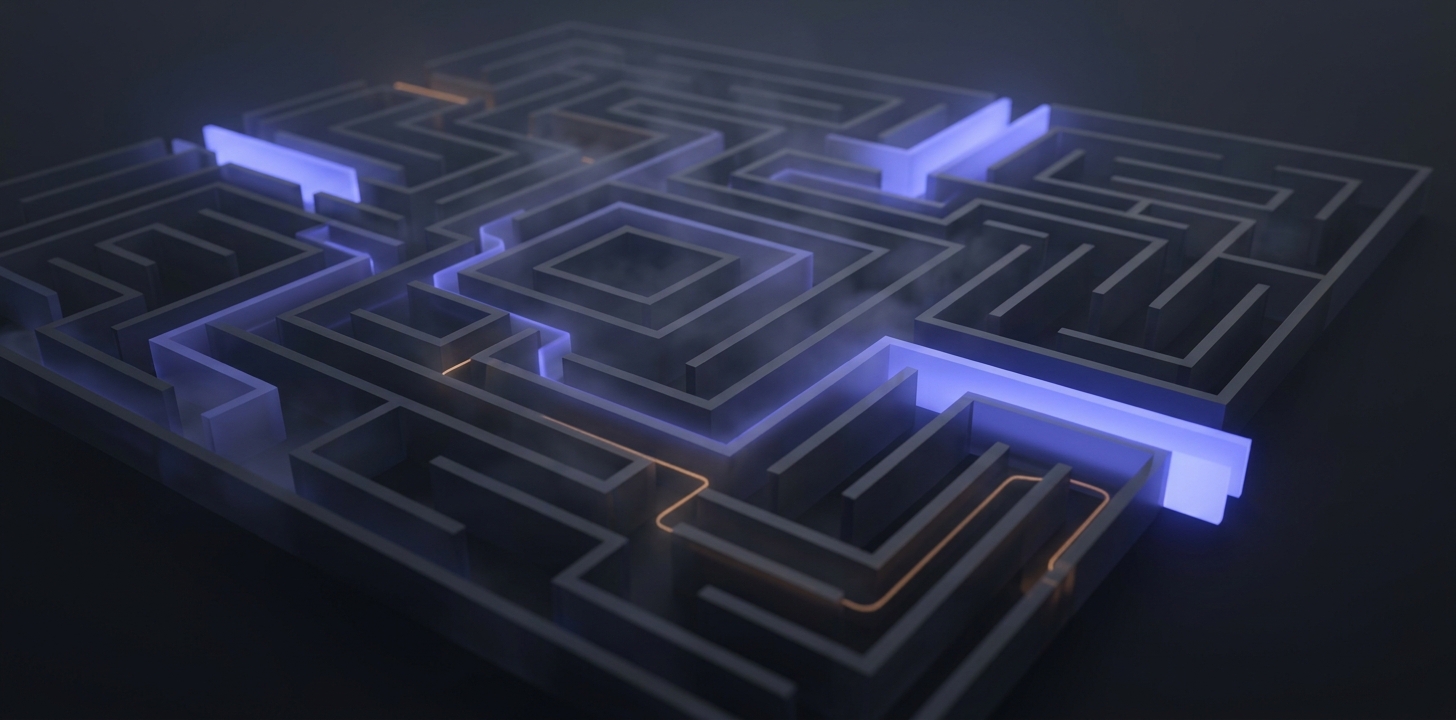

I find it useful to think of it as a labyrinth with many exits. Some exits are wider than others. The wider ones got that way because training data made certain responses statistically likely: certain phrasings appeared more often, certain reasoning styles were rewarded during alignment, certain structures dominate what "a helpful expert" sounds like.

The model follows the path of least resistance toward the widest exit. That is the entire mechanism. There is no separate process in the model that evaluates whether the exit is the right one. And so, no internal critic. As mentioned, this is mitigated by having multiple LLM agents to check each other output. Yet, what matters is that the output is the only fixed point, the single thing the whole system is optimised to produce.

The model follows the path of least resistance toward the widest exit. That is the entire mechanism.

When the correct answer requires a path the model's weights do not favour, the model does not stop because each node layer is another set of weights. There is no engine wrapping each node that can evaluate the correctness of the path chosen. More often than not, this mechanism leads to an exit that may not be the right one. Moreover, a group of layers and weights can easily force a way through, often generating what we call hallucination.

There are vastly more examples of confident answers in the training data than honest admissions of uncertainty. The wider exit is almost always a confident-sounding answer, right or wrong.

A bigger labyrinth does not mean understanding

The hope is that scale solves this with more parameters, more training data, and more compute. Surely at some point the labyrinth becomes large enough that it may give the impression that it understands like a real person.

Unfortunately (or fortunately), it does not, and this is worth arguing with for a moment. A larger matrix has more paths and more exits, and some of those exits are genuinely better which allow the model to get things right. It is real and measurable progress indeed. However, the fundamental mechanism is unchanged and the system still navigates by statistical weight toward the most probable output. With no internal representation of truth, no capacity to check its own reasoning against reality, and no working model of the world it is talking about, those trillion parameters make the labyrinth richer and more intricate but cannot provide eyes.

And here is where it gets interesting. When a language model appears to weigh alternatives, when it says something like "on one hand this, on the other hand that," what is actually happening underneath is surprisingly specific. The model committed to a high-probability trajectory within the first few tokens of its response, and everything that follows rationalises that trajectory: careful hedging, apparent consideration of opposing views, measured tone and so on. These are all features of the output distribution, patterns the model learned from millions of examples of how thoughtful experts write. They are beautifully shaped texts that mimic deliberation.1This behaviour is well-characterised in the alignment literature as sycophancy and mode collapse. RLHF, the reward shaping that makes models feel helpful, systematically rewards agreeable continuations. Perez et al. (Discovering Language Model Behaviors with Model-Written Evaluations, 2023) documented the pattern across model scales.

Try this yourself. Present a language model with options A and B when the genuinely best answer is D. It will almost always pick the closer of the two you offered, because producing D would mean rejecting your framing entirely. The alignment process, known in the field as RLHF,2RLHF stands for Reinforcement Learning from Human Feedback. After the initial training on text data, the model goes through a second stage where human evaluators rate its responses. The model then learns to produce outputs that score well with these evaluators, which in practice means it learns to be agreeable, helpful-sounding, and reluctant to challenge the user's framing. Effective at making models feel cooperative, but it systematically rewards fluent agreement over accurate disagreement. actively works against that kind of pushback. A model that constantly challenges your premises would feel adversarial and unhelpful, and the training optimises hard against that feeling.

So you end up with a remarkably articulate system that answers the question you asked, even when the more valuable move would be to tell you the question itself is wrong. Whether it has a hundred billion parameters or a trillion, this structural disposition stays the same. In my words, the labyrinth is bigger, more sophisticated and more capable but the wider exits are still the ones the training data has carved.

The domain-aware fallacy

The extraordinary coding success of LLMs has trained an entire industry to believe that fluency and correctness are naturally correlated. In coding, they often are. In most professional domains, they are dangerously not.

In law, the ground truth is precedent-shaped, jurisdictionally relative, and frustratingly contextual. Whether a contract clause holds up depends on which court would hear the dispute, what that court ruled last time, whether the regulation changed since, and what the other side will argue the clause actually means. There is no compiler to check the output and no test suite to validate the reasoning. The model produces fluent, professional-sounding legal text without any of the hard-won judgement that makes a legal opinion worth reading.

In accounting, the picture is similarly layered: correctness depends on which standard applies in which jurisdiction, what the materiality threshold is, when the transaction occurred, and how the auditor will interpret the standard, which is itself a judgement call, shaped by years of practice and professional exposure. The model produces text that looks like solid accounting advice. That advice will frequently be wrong in subtle ways that only a qualified accountant, or someone who has lived through audits and seen how standards actually get applied in the real world, would catch.

The assumption underneath most "domain-aware AI" pitches is seductively simple: swap the system prompt from coding to law, swap the tools from filesystem to document management, feed it legal documents instead of code, and you get a legal agent. It would be awesome, but what you actually get is a coding agent wearing a legal costume. Or the one that decomposes, pattern-matches, and pursues the widest exit through the labyrinth, now shaped by legal training data instead of code. The mechanism is exactly the same, however. With no access to the professional judgement it is so convincingly imitating.

What you actually get is a coding agent wearing a legal costume.

The gap between fluent and correct is where the real damage lives. The truly dangerous part is that it is completely invisible from the output alone.

What retrieval actually retrieves

The industry has converged on RAG, retrieval-augmented generation, as the fix for grounding. It is based on the assumption that if you give the model real documents, let it search through actual sources, and have it anchor its answers in verifiable references, you solve the grounding problem and get answers you can rely on. For simple, well-defined lookups this works well enough and can be genuinely useful.

For anything resembling real professional judgement, it falls short in ways that are worth understanding deeply.

Vector search finds documents that are textually similar to your question while graph search finds entities that are relationally connected to the concepts you mentioned. Both are valuable in their own right, can be genuinely useful, but represent the easy part of the problem.

What professional work actually needs is well beyond what any retrieval architecture can deliver. For example, a senior auditor or a practising lawyer need procedural knowledge, ie which steps follow which, in what order, with what deliverables expected at each gate. Moreover, constraint structures, which regulatory standards override which, the applicable thresholds, and exceptions are non trivial to capture and dissect. I can continue with authority hierarchies, their weights and ruling rankings, temporal reasoning, and regional applications.3Barnett et al. (Seven Failure Points When Engineering a RAG System, 2024) catalogued seven distinct failure modes in production RAG, several of which, particularly "wrong granularity" and "not in context," directly reflect the gap between textual similarity and domain reasoning.

Vector retrieval gives you "find me documents that mention X." Graph retrieval gives you "find me entities related to X." Neither one answers to "what should I do, in what order, subject to what constraints, with what authorities, given what is true today."

These are reasoning problems sitting on top of retrieval, and they require entirely different cognitive machinery that better embeddings and denser knowledge graphs cannot produce. And I say this having spent considerable time trying to make them work.

What happens in a real room

When a genuinely complex problem lands at a services firm, whether it is an M&A transaction, a cross-border restructuring, or a sensitive compliance investigation, what actually happens bears no resemblance to what any current AI architecture can do.

Multiple specialists, each with years of deep experience in their particular area, look at the same facts through fundamentally different lenses:

- M&A counsel reads the deal terms and sees a specific set of commercial risks.

- Tax counsel reads the same terms and immediately spots different exposures, ones the commercial lawyer was not even looking for.

- Regulatory counsel reads them again and identifies a third set of concerns entirely.

The final advice, the thing the client actually pays for, comes from the synthesis of all these perspectives, including the productive disagreement. There are moments where two specialists see the same clause differently, and the resolution of that disagreement creates an insight that neither of them had when they started. What makes this work is an instinct senior practitioners share, a defensive pessimism about narrow framings, built from years of having been wrong, that makes them automatically reach for options C, D, and E when someone confidently offers A and B.

A single language model, however powerful, has one prior and one reasoning style. Every time you run the same model you end up getting three versions of the same labyrinth. The words may differ but the structural limitations stay the same.4Wang et al. (ReConcile: Round-Table Conference Improves Reasoning via Consensus Among Diverse LLMs, ACL 2024) showed that heterogeneous model panels significantly outperform homogeneous ones, suggesting the value of the room comes from prior-diversity, which identical models cannot provide.

And there is a deeper layer still, one that I think matters more than any of the technical limitations I have described so far. When someone mentions the sun, a human mind does not perform a lookup. It activates, simultaneously and without effort, memories, geometric abstraction, gravitational physics, the beach last August, the moon and its pull, something about CERN and the beautiful lake a few tens of kilometres away and how much you like or dislike the weather. That extraordinary cascade of sensorimotor, episodic, aesthetic, and abstract associations, all firing at once and interfering with each other in unpredictable ways, is what produces hypotheses genuinely worth investigating.

This is exactly how a senior professional connects a contract clause to an obscure regulatory change from three years ago to a casual conversation from last week and arrives at something that was not contained in any of the individual inputs. Current AI systems retrieve things that are already known to be related. They do not generate new connections from the collision of different frames of experience. The entire field has built its architecture around retrieval as the dominant cognitive primitive, and retrieval, however sophisticated, is the wrong primitive for the kind of work these professionals actually do.

Your role is still safe

These systems make real work faster, and meaningfully so. I use them every day and my output is genuinely better for it. They are remarkably good at parsing what you mean, summarising what they find, and turning messy, unstructured input into clean, structured output.

Use them for exactly that. Use them to draft, to search, to translate between formats, to accelerate the parts of your work that are genuinely mechanical and repetitive.

But do not use them to make decisions. Do not let them replace the expertise, the knowledge, the hard-earned wisdom that comes from years of committed practice in a domain. Do not mistake the impressive fluency of the output for the professional judgement that your clients, your patients, your stakeholders are actually paying for.

The parts of your job that involve real judgement, deep domain knowledge, and the instinct that comes from years of seeing how things actually play out when the stakes are real, those are safe. A language model can tell you what similar contracts looked like. It cannot tell you whether this particular one is going to cause you trouble next year. That distinction matters enormously, and it is not going anywhere.

I fully expect LLMs to evolve. But knowing which exit actually matters, and knowing when the question itself needs changing before you walk through any of those "labyrinths", that is what expertise has always been. I do not see it moving from the room to the machine any time soon.